- Part 1: Introduction

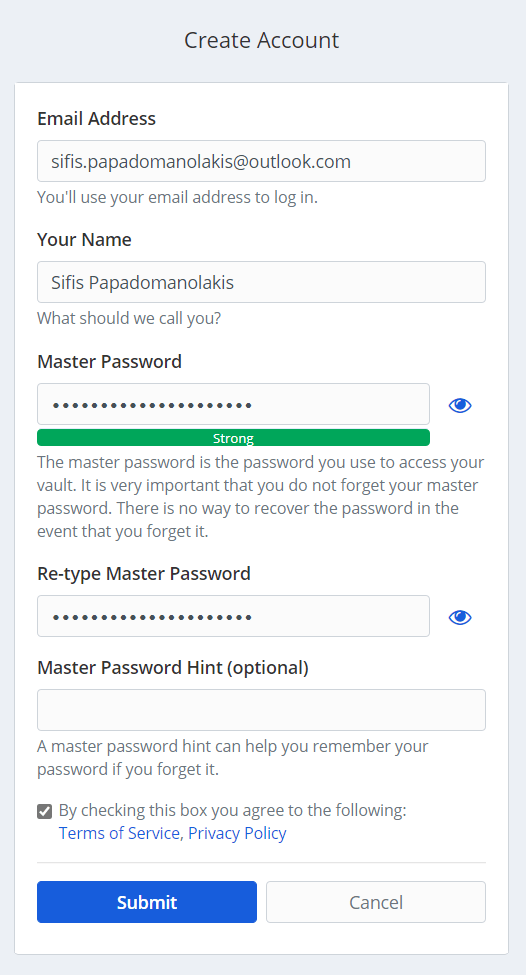

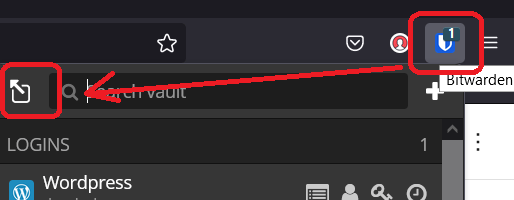

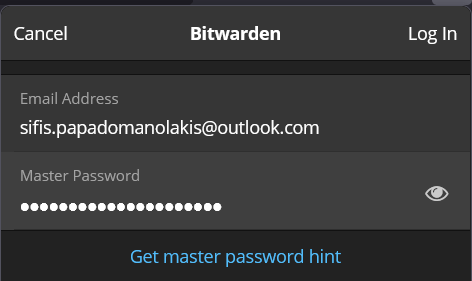

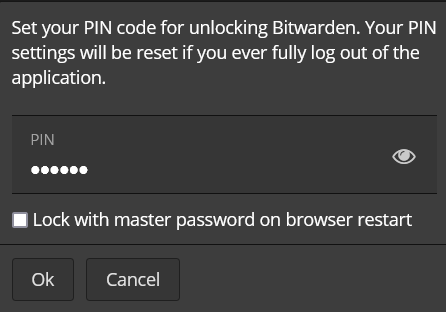

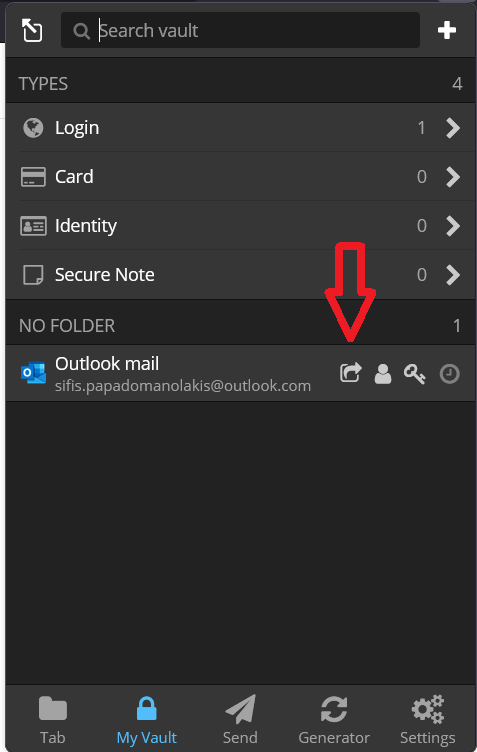

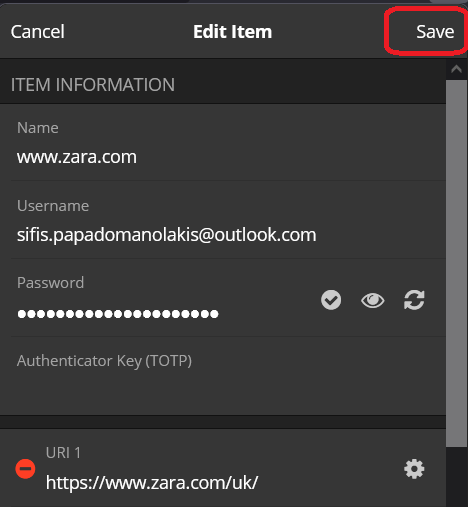

- Part 2: Store your passwords

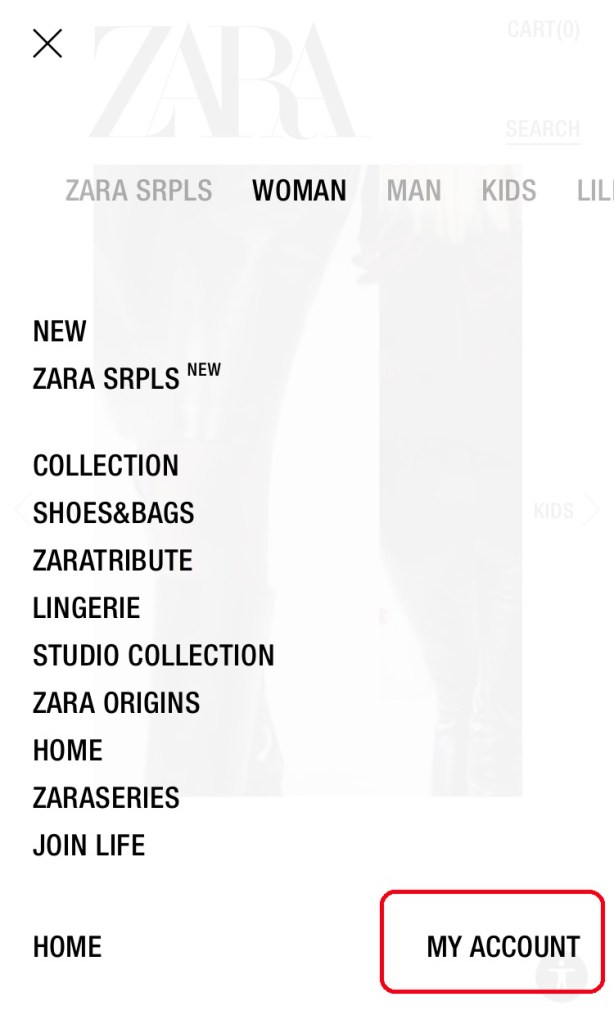

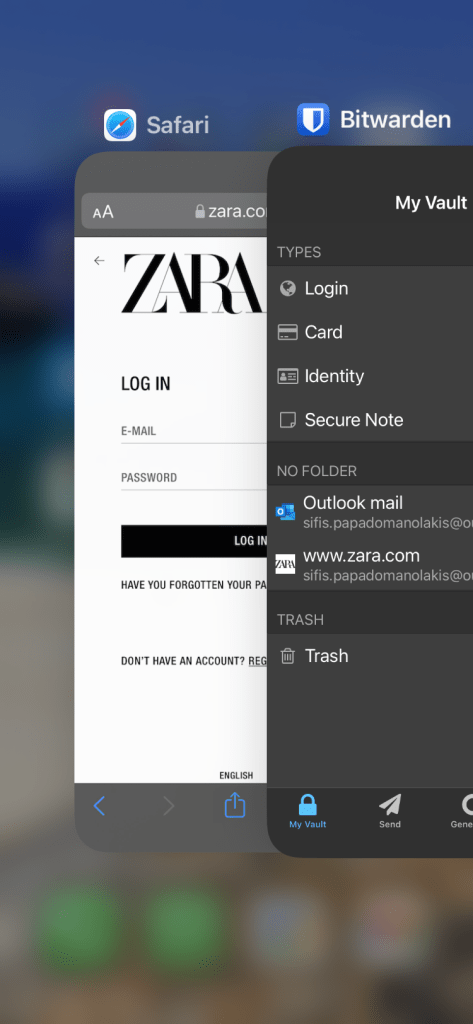

- Part 3: Now on your phone

Let’s start from the very beginning. First, I’ll explain a few things you’ll hear often. A lot of these words can seem daunting but are actually quite simple. Then we’ll get down to the nitty-gritty.

I DON’T WANT TO DO THIS WHY DO I NEED TO DO THIS???!??!

Because there are some things that you 1) want to be able to do on the internet but 2) don’t want other people to be able to do (at least not without you knowing).

You don’t want other people to move money from your bank account. Or buy things with your credit card. You get the idea.

But but but I already have a password!

Yes, you do. But there are some problems.

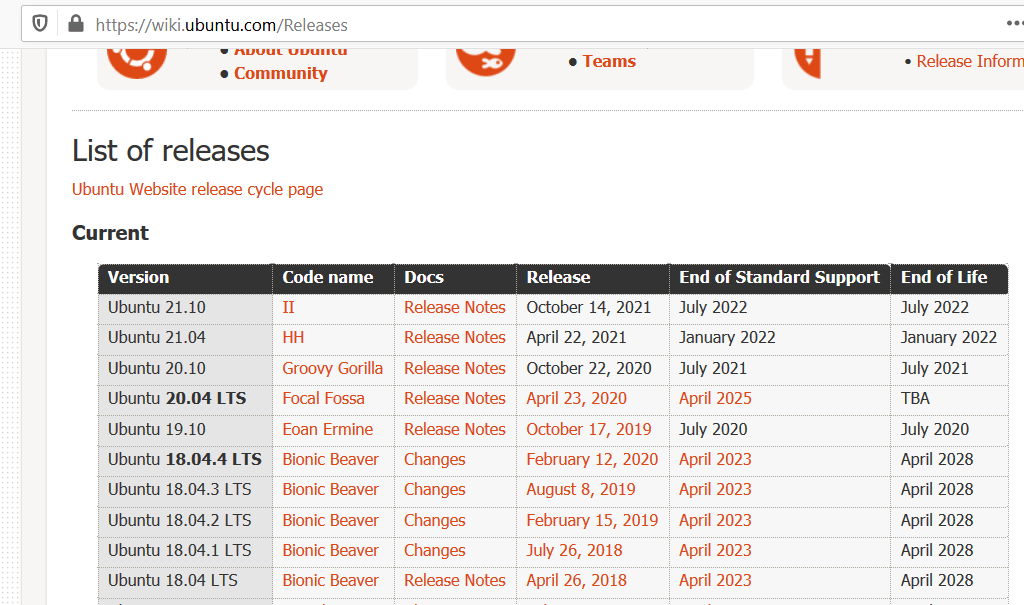

If you’re, well, human, you can remember some things, but not many and not very well (read this if you don’t believe me). And it’s 2021: unless you live under a rock, you have at least 10-20 accounts with different services, like your bank, your email, and so on. Try to count them and write in the comments how many you found 😊

The other problem is that criminals steal data from these services. A lot. Like, in the billions. Estée Lauder had a breach in February 2020 in which 440 million records (data about people) were stolen. MGM Resorts, which you may know from the casino in “Ocean’s 11”, had personal information about more than 10 million guests stolen. And these are just 2 of the roughly 3000 data breaches reported in 2020 in the US alone.

What this means is that your password will get stolen and there’s nothing you can do about it. Well, almost nothing. You can and should do 3 things:

- Have a unique password per service. This way, when your H&M password is stolen, it cannot be used to pay with your PayPal account.

- Use random passwords. For crying out loud, do not use your phone number. You might think that adding a few letters here and there makes it safe. It does not. A computer running a program you can download for free can crack your “safe” password in about an hour. The password must be long and random, something like g5D9C467YxeEfAmqL. You get the idea.

- Use 2-factor authentication. Since this post is already long, I’ll cover it in a later one.
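For the technically curious, here is a minimal Python sketch of what “long and random” means in practice. It assumes a password made only of upper-case letters, lower-case letters and digits (62 possible characters, like the example g5D9C467YxeEfAmqL) and compares it with a 10-digit phone number. The function names are made up for this illustration; in real life your password manager generates passwords for you, so you never need to run anything like this.

```python
import secrets
import string

# Assumption: passwords made of upper-case letters, lower-case letters and digits,
# like the example g5D9C467YxeEfAmqL (26 + 26 + 10 = 62 possible characters).
ALPHABET = string.ascii_letters + string.digits


def random_password(length: int = 17) -> str:
    """Hypothetical helper: build a password by picking each character at random.

    `secrets` uses the operating system's cryptographically secure random source,
    unlike the `random` module, which is predictable and not meant for passwords.
    """
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def possible_passwords(alphabet_size: int, length: int) -> int:
    """Hypothetical helper: how many different passwords exist,
    i.e. how many guesses it takes to try them all."""
    return alphabet_size ** length


# Assumption: a phone number is 10 digits, each one of 0-9.
phone_number_space = possible_passwords(10, 10)
random_password_space = possible_passwords(len(ALPHABET), 17)

print("A random password:", random_password())
print(f"Phone number:      {phone_number_space:.1e} possibilities")
print(f"17 random chars:   {random_password_space:.1e} possibilities")
```

The phone number has 10^10 possibilities, while the 17-character random password has 62^17, roughly 3 × 10^30. That gap is why length plus randomness matters far more than sprinkling a few letters into something easy to guess.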

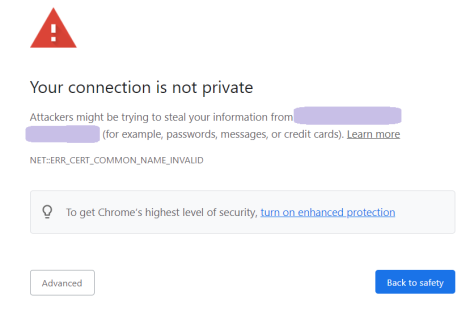

What does “authentication” mean? And what are these “credentials” I keep hearing about?

Credentials just means whatever you need to give to a service, like a web site, so that it can check it’s really you. Some of it is secret, some of it is not. Usually it’s a username and a password, but it might be more, like your fingerprint or a code that you receive on your phone.

Authentication is just the process that checks the credentials and lets you in (or not).

What’s a password manager?

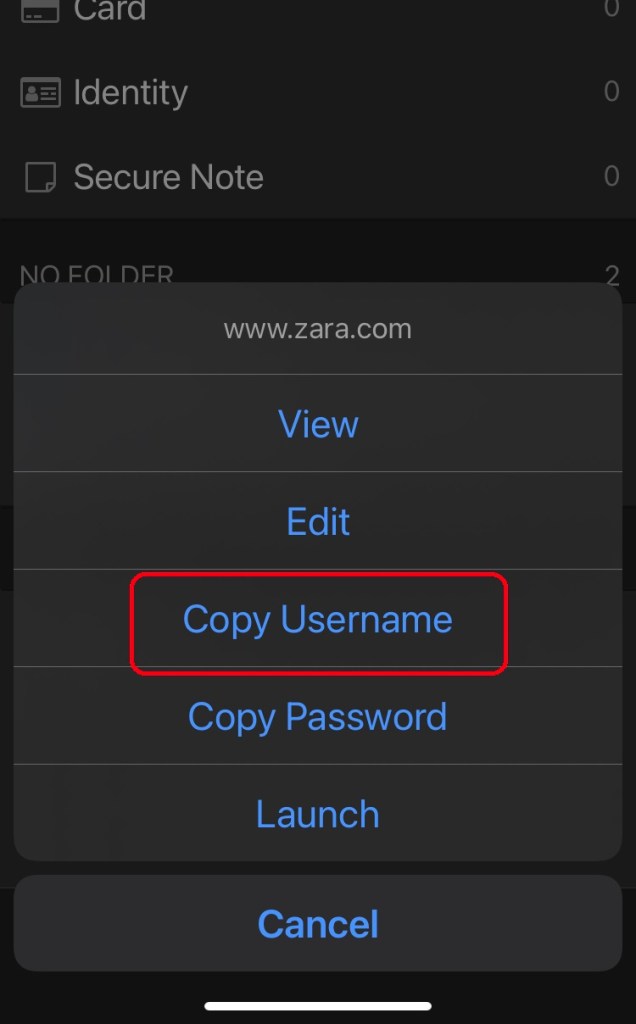

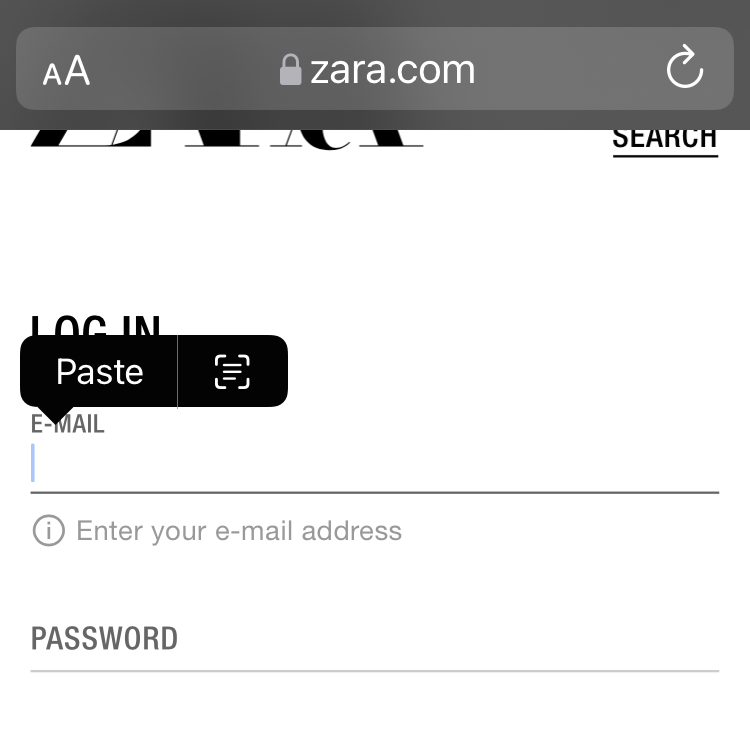

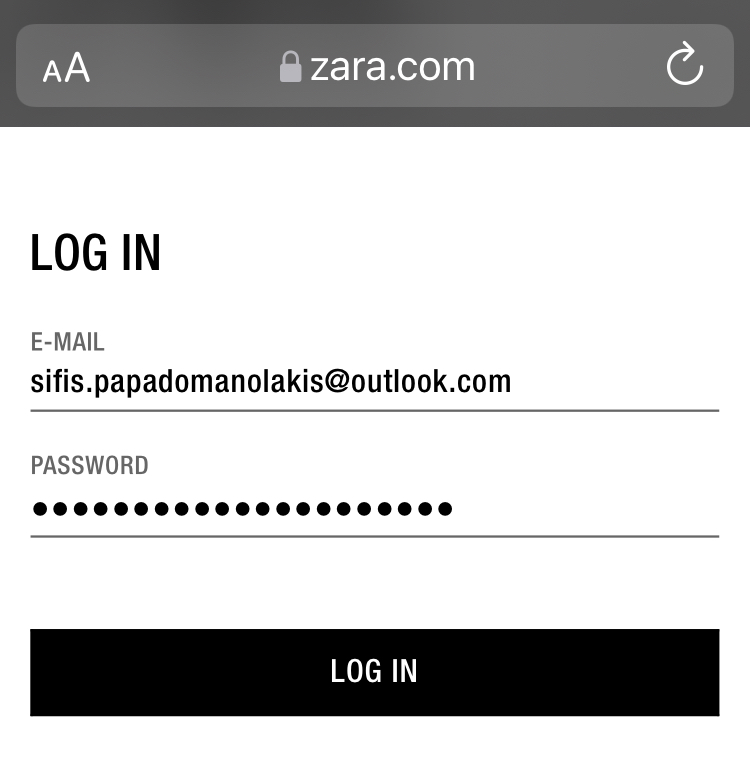

It’s a program that stores your credentials and helps you use them. Because your passwords must be long, it’s tedious to type them yourself. So the password manager can, for example, auto-fill them into your e-banking web site, or let you copy-paste them there.

Ok, ok, I’ll do it, but which one should I use?

There are many good password managers you can use, like 1Password, LastPass, Devolutions, NordPass and others. Here I’ll use my favourite, Bitwarden, because it’s arguably the best free one and, in my humble opinion, the easiest to use.

Obviously this is just one way to do it; it works and it’s secure, but of course you can change things, for example use a different program. The main things to consider if you decide to use another one are:

- It should have both a computer and a smartphone application.

- It should be able to synchronize your credentials between them.

- It should be as simple to use as possible.

And how much time will it take?

Realistically, assuming you’re an average computer and smartphone user, for 5-10 web sites you’ll need around a couple of hours from start to finish. Obviously, if you have dozens it will take longer (though not proportionally), but it’s also worth more. If you get stuck, write to me in the comments and I’ll do my best to help.

UPDATE: some friends suggested that, instead of doing all your sites at once, the effort is more manageable if you do the most important ones first (e-banking, email, etc.). The rest you can do as you come across them in everyday use.

Now I’ll explain how to do it on your computer and smartphone. Ready, set, go!